How to Measure Training ROI Effectively

Organisations around the world spend enormous sums on training and development every year. Yet a disproportionately small share of them can answer a question that sounds simple: did it work? Measuring training ROI remains one of the most discussed and least practised disciplines among L&D managers, HR professionals and finance business partners. The gap between intention and action is not caused by a lack of interest. It is caused by a lack of method, of data infrastructure, and of the organisational will to connect learning with performance.

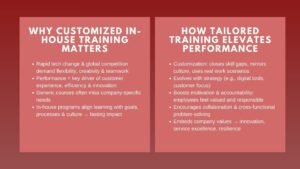

The stakes are rising. As organisations face tighter budgets and closer scrutiny of discretionary spend, training programmes that cannot demonstrate their value are increasingly vulnerable to cuts. This applies whether the programme in question is a technical upskilling course, a corporate finance masterclass for high-potential analysts, or a compliance refresher for an operational team. The ability to measure what training delivers in business terms is rapidly becoming a core competency for anyone who designs or approves learning programmes.

This article is a practical, accessible guide to measuring training ROI for junior to mid-level professionals. It covers the frameworks that matter, the processes that work and the mistakes organisations tend to make. It draws on real cases to show what good measurement looks like and what happens in its absence. The principles here will serve you well whether you are preparing for your first training budget discussion or trying to build a more rigorous evaluation culture in your organisation.

Understanding the Foundations: Why ROI Measurement Is So Often Skipped

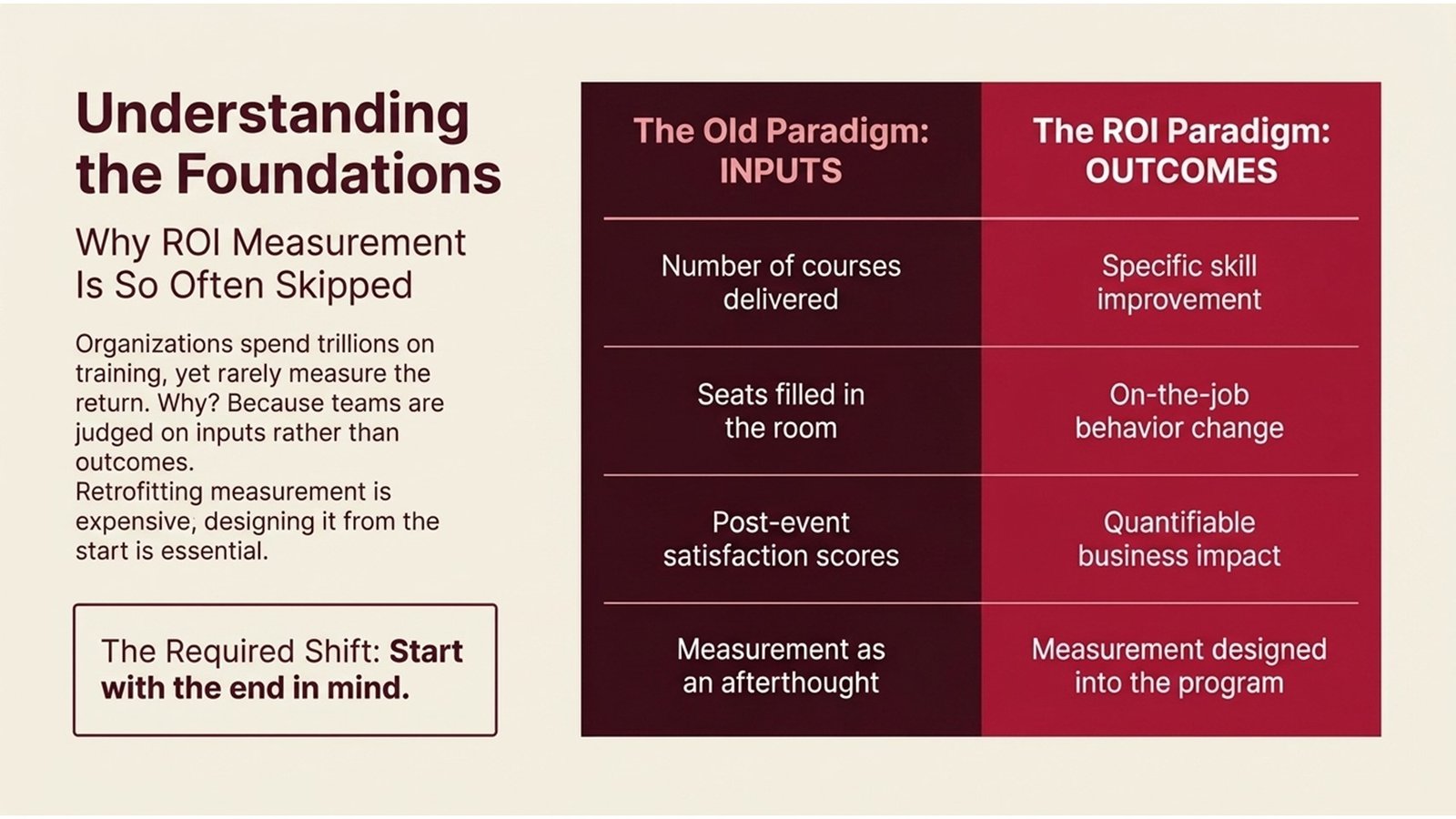

Most experienced L&D professionals have a standard list of reasons why their organisation does not measure training ROI. It is too time-consuming. The data is difficult to pin down. Senior management does not ask for it. These reasons are understandable, yet to a large extent they are self-reinforcing. When measurement is not expected, it is not built into programme design. When it is not part of the design, it is costly to retrofit. And when retrofitting is costly, measurement is dropped altogether, leaving the organisation no better informed about whether its training spend is delivering any benefit.

The underlying problem is that most training teams have historically been evaluated on inputs rather than results. The number of courses offered, the number of seats filled, the satisfaction scores from post-event surveys: these are the measures that tend to appear in annual reports. They are simple to gather and look good on the page. But they say little about whether skills improved, whether behaviour changed on the job or whether the business benefited. Measuring training ROI requires a different discipline: one that starts with the end in mind and builds evaluation into the programme from the very first steps.

It is also worth noting that some forms of training are genuinely harder to quantify than others. Soft skills programmes, culture change initiatives and wellbeing training generate real value, but that value is diffuse and slow to materialise. Technical training, such as a corporate finance masterclass for finance professionals, is easier to measure because the skill is specific, performance can be measured directly and the payback period is relatively short. The principles of ROI measurement apply in both cases, but practitioners must adjust their expectations accordingly.

Table 1: The Kirkpatrick Model — A Foundation for Training Evaluation

| Level | What It Measures | Data Source | Typical Metric |
| --- | --- | --- | --- |
| 1 – Reaction | Learner satisfaction and perceived relevance | Post-training survey | Average satisfaction score (1–5) |
| 2 – Learning | Skills and knowledge acquired | Pre/post assessments | % improvement in test scores |
| 3 – Behaviour | Application of skills on the job | Manager observation, 360 feedback | Frequency of behaviour change |
| 4 – Results | Business impact of training | KPIs, revenue, error rates | Cost reduction, productivity increase |

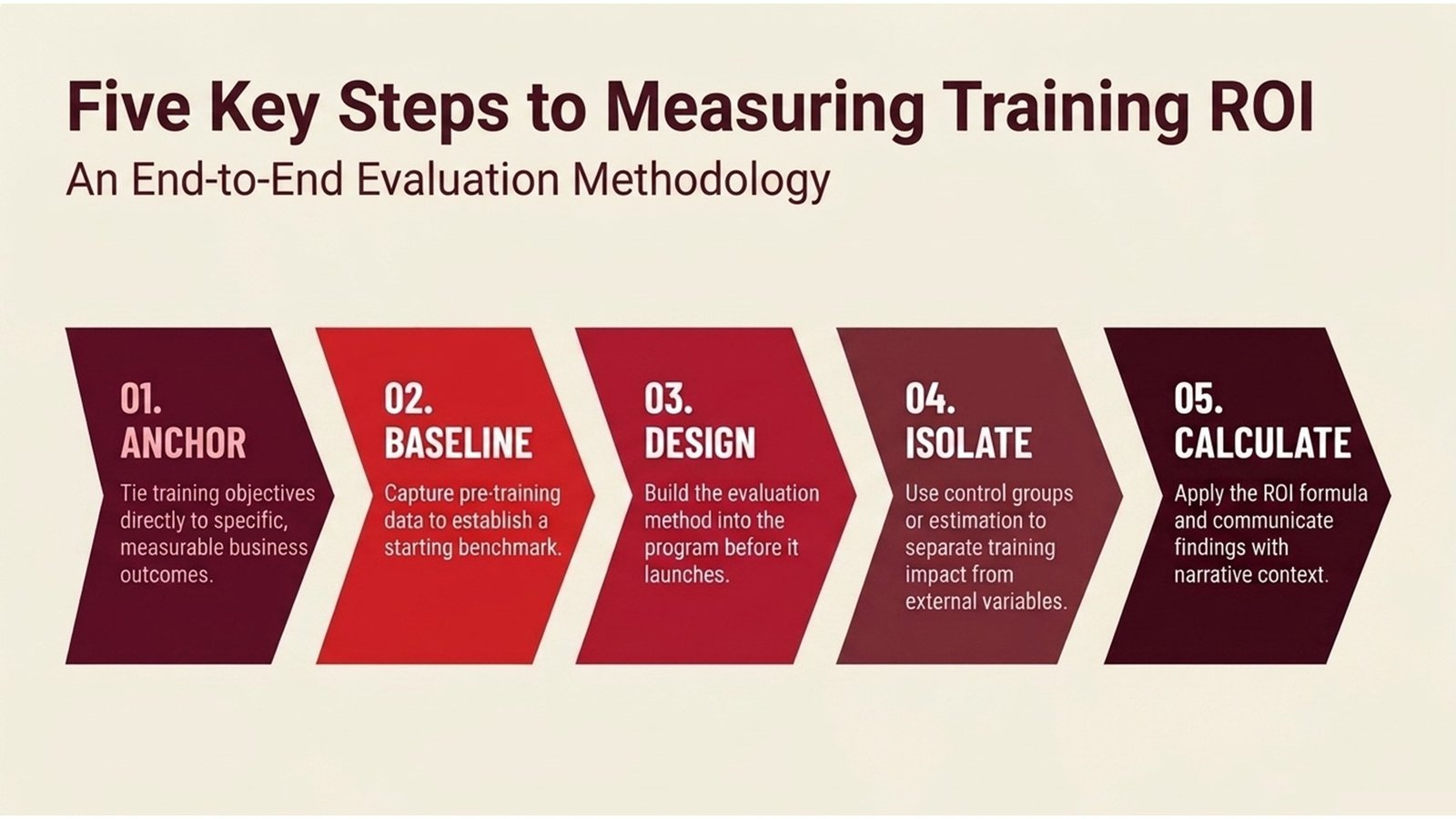

Five Key Steps to Measuring Training ROI

Good training ROI measurement is not a one-off calculation performed at the end of a programme. It is an end-to-end process that starts well before training is designed and continues long after delivery. The five steps below are the core disciplines that organisations with mature measurement practices follow consistently.

Step 1: Anchor training objectives to business outcomes. The most common reason training ROI cannot be calculated is that the programme was never linked to a business objective in the first place. Before designing any content, the team should be able to state which business problem the training addresses. Is it reducing financial reporting errors? Improving customer retention? Shortening the time new analysts take to reach full productivity? Specific, measurable business objectives are the foundation of meaningful ROI measurement.

Step 2: Establish a baseline before training begins. Improvement cannot be measured without a starting point. Pre-training data (assessment scores, KPI snapshots, error rates, productivity rates and so on) forms the baseline against which post-training performance is compared. This step is often skipped because it requires coordination between L&D and line management, but it is non-negotiable for a credible ROI calculation. Even a simple pre-assessment and a manager rating scale can provide a defensible baseline.
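
As a rough illustration of the baseline step, the sketch below compares pre- and post-training assessment scores for a small cohort. The scores and the function name `percent_improvement` are hypothetical and only show the shape of the comparison; real figures would come from your own assessments.

```python
from statistics import mean


def percent_improvement(pre_scores, post_scores):
    """Percentage change in the cohort's mean score from baseline to post-training."""
    if len(pre_scores) != len(post_scores) or not pre_scores:
        raise ValueError("need matching, non-empty pre and post score lists")
    baseline = mean(pre_scores)
    if baseline == 0:
        raise ValueError("baseline mean is zero; percentage change is undefined")
    return (mean(post_scores) - baseline) / baseline * 100


# Hypothetical assessment scores (out of 100) for five participants.
pre = [50, 60, 55, 45, 40]
post = [70, 75, 66, 63, 56]

print(f"Mean improvement: {percent_improvement(pre, post):.1f}%")
```

Without the `pre` list there is nothing to compare against, which is why the baseline has to be captured before delivery.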

Step 3: Design evaluation into the programme, not onto it. Each of the four levels of the Kirkpatrick model (reaction, learning, behaviour and results) needs a data collection method defined at the design stage. Level 1 data comes from post-training surveys. Level 2 data comes from assessments. Level 3 data requires structured follow-up with participants and their managers, normally 30 to 90 days after the training. Level 4 data requires access to business KPIs. Deciding how each level will be measured before delivery saves considerable effort later and yields far more useful data.
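
One lightweight way to enforce this at the design stage is a completeness check on the evaluation plan. The sketch below is only an illustration: the plan structure, the level names used as keys and the function `missing_levels` are assumptions, not part of any standard tool.

```python
KIRKPATRICK_LEVELS = ("reaction", "learning", "behaviour", "results")


def missing_levels(plan):
    """Return the Kirkpatrick levels that have no data collection method assigned."""
    return [level for level in KIRKPATRICK_LEVELS if not plan.get(level)]


# Hypothetical evaluation plan drafted at the design stage.
plan = {
    "reaction": "post-training survey",
    "learning": "pre/post assessment",
    "behaviour": "manager follow-up at 60 days",
    # "results" has not been planned yet
}

gaps = missing_levels(plan)
print("Levels without a data collection method:", gaps or "none")
```

A programme whose plan still has gaps is a signal to pause and agree the missing data source before delivery begins.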

Step 4: Isolate the contribution of training from other variables. This is the most technical step, and the one that separates rigorous ROI analysis from anecdote. Business performance changes for many reasons: market conditions, new leadership, seasonality, changes in pricing. To claim that training caused an improvement, organisations must account for these other factors. The simplest approach is to use a control group: a comparable team that did not receive the training. Where this is not possible, statistical adjustment or expert estimation can be used instead, with appropriate caveats.
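
A control-group comparison can be sketched as a simple difference-in-differences: the change in the trained group minus the change in the control group over the same period. The KPI, the figures and the function name `isolated_effect` below are hypothetical, and the approach assumes the two groups would otherwise have moved in parallel.

```python
def isolated_effect(trained_before, trained_after, control_before, control_after):
    """Change in the trained group minus the change in the control group."""
    trained_change = trained_after - trained_before
    control_change = control_after - control_before
    return trained_change - control_change


# Hypothetical KPI: average monthly reporting errors per team.
effect = isolated_effect(
    trained_before=40, trained_after=28,  # trained team: 12 fewer errors
    control_before=42, control_after=38,  # control team: 4 fewer errors
)
print(f"Change attributable to training: {effect} errors per month")
```

In this made-up case the trained team improved by 12 errors, but 4 of those would plausibly have happened anyway, so only the remaining 8 are credited to training.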

Step 5: Calculate, communicate, and act on the findings. The formula ROI (%) = (programme benefits − programme costs) / programme costs × 100 yields a percentage that is directly comparable with other investments. The numerator, benefits minus costs, is the net programme benefit. However, the number alone is seldom enough to drive decisions. The best ROI reports pair the headline figure with the story behind it, a breakdown of benefits by category and an explicit statement of the assumptions used in the calculation. Organisations that do this consistently tend to find that their training budgets attract less challenge, because they have a track record of accountability.
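
The calculation itself is short. The sketch below uses the common definition of ROI as net benefits (benefits minus costs) divided by costs, expressed as a percentage. The figures and the function name `training_roi` are hypothetical.

```python
def training_roi(total_benefits, total_costs):
    """ROI as a percentage: net benefits divided by costs, times 100."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return (total_benefits - total_costs) / total_costs * 100


# Hypothetical figures for one programme.
costs = 80_000      # facilitator fees, materials, participant time
benefits = 200_000  # monetised benefits attributed to training

print(f"ROI: {training_roi(benefits, costs):.0f}%")
```

Note that costs are subtracted only once: a programme whose benefits exactly equal its costs has an ROI of 0%, not 100%.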

Process Flow 1: Six-Phase ROI Measurement Process

| Phase | Activity | Key Question | Output |
| --- | --- | --- | --- |
| 1. Plan | Define training goals and success metrics | What does success look like in business terms? | Measurement framework |
| 2. Baseline | Gather pre-training performance data | Where do learners stand before the training? | Baseline KPI snapshot |
| 3. Deliver | Run the training and log engagement data | Are participants engaging as intended? | Attendance and completion records |
| 4. Evaluate | Run assessments and measure Levels 1–4 | Did learning and behaviour change? | Assessment reports per level |
| 5. Isolate | Adjust for external factors using comparison groups | How much of the change is due to training? | Adjusted benefit estimate |
| 6. Calculate | Apply the ROI formula and present results | Is the training delivering measurable value? | ROI percentage and narrative report |

Real Cases: What Measurement Looks Like in Practice

A good example of rigorous training ROI measurement comes from a pharmaceutical firm in Europe that invested in a structured capability-building programme for its procurement and supply chain teams. The company had seen a pattern of costly breakdowns in supplier negotiations and wanted to build business expertise across a group of roughly 150 middle managers. Before the programme was rolled out, the L&D department worked with finance to establish a baseline for average contract value, average negotiation cycle time and supplier rebate capture rates. These were the Level 4 metrics against which the programme would ultimately be evaluated.

One year into the programme, the company compared these measures with those of a comparable group of managers in a different region who had not yet received the training. The trained group showed a 22 percent increase in rebate capture and a 15 percent reduction in negotiation cycle time. Once programme costs were factored in (facilitator fees, materials and the cost of participants' time away from their desks), the calculated ROI was 310%. The result was strong enough for the programme to be extended to three more regions in the next financial year, with the measurement framework already in place to support the rollout.

A second example is an Australian financial services organisation that ran a series of corporate finance masterclasses for its high-potential analyst group. The company had observed that analysts were slower than benchmark at completing financial models independently. The six-week programme focused on specific modelling and analysis techniques. Pre- and post-assessments showed an average improvement of 38% in technical accuracy, and manager assessments conducted 60 days after training confirmed that 72% of participants were applying the new techniques consistently in live client engagements. The cost per head was estimated at about one-third of the cost of recruiting equivalent capability externally, which illustrates the case for cost-effective in-house training programmes over external recruitment as a talent management strategy.

Table 2: ROI Calculation — Key Inputs and How to Quantify Them

| Input Category | Examples | How to Quantify |
| --- | --- | --- |
| Training Costs | Facilitator fees, materials, time off the job | Actual invoices + salary cost of hours spent in training |
| Performance Benefits | Faster task completion, fewer errors | Compare pre/post performance KPIs |
| Retention Savings | Lower employee turnover after training | Multiply the reduction in exits by the cost-to-hire estimate |
| Revenue Uplift | Higher sales, greater upsell uptake | Isolate the revenue attributable to training using a control group |
| Risk Reduction | Fewer compliance violations and incidents | Compare annualised incident costs before and after training |
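
Two of the rows above translate directly into arithmetic. The sketch below shows one possible way to quantify training costs and retention savings. All figures and both function names are hypothetical, and the cost-to-hire value in particular is an estimate you would need to agree with finance.

```python
def training_cost(invoices, attendee_hours, hourly_salary_cost):
    """Direct invoices plus the salary cost of hours spent in training."""
    return sum(invoices) + attendee_hours * hourly_salary_cost


def retention_savings(exits_before, exits_after, cost_to_hire):
    """Reduction in exits after training, multiplied by an estimated cost-to-hire."""
    return max(exits_before - exits_after, 0) * cost_to_hire


# All figures below are hypothetical.
cost = training_cost(invoices=[12_000, 3_000], attendee_hours=400, hourly_salary_cost=50)
savings = retention_savings(exits_before=10, exits_after=7, cost_to_hire=15_000)

print(f"Training cost: {cost:,}")
print(f"Retention savings: {savings:,}")
```

The `max(..., 0)` guard means a rise in exits is reported as zero savings rather than a negative benefit. That is a simplifying choice, and a stricter analysis might record the increase as a cost.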

Building Cost-Effective Programmes Worth Measuring

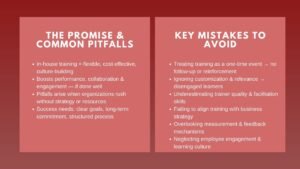

There is a meaningful relationship between how a training programme is designed and how measurable it is. Programmes that are well scoped, aligned with the business and designed to achieve specific learner outcomes are much easier to assess, and are likely to yield a better ROI, than programmes built around whatever content is available or whatever the trainer prefers. This is one of the main arguments for cost-effective in-house training programmes: when organisations develop training internally, they have far more control over the design decisions that determine whether measurement is possible at all.

Process Flow 2: Building a Cost-Effective In-House Training Programme

| Step 1 | Step 2 | Step 3 | Step 4 | Step 5 |
| --- | --- | --- | --- | --- |
| Needs Analysis | Budget Mapping | Programme Design | Pilot & Refine | Scale & Sustain |
| Identify skills gaps linked to business objectives | Allocate costs per component; project savings | Combine in-house expertise with targeted external input | Test on a small cohort; review and adjust | Roll out widely; embed in the performance cycle |

Using internal subject matter experts to facilitate training is one of the most practical ways to keep training costs under control without compromising quality. The knowledge needed to upskill a cohort often already exists within the organisation, but it has not been systematised and made available as a shared resource. A corporate finance masterclass delivered by a senior internal CFO or finance director, for example, carries a credibility and relevance that an external vendor cannot always match. The cost model also differs fundamentally: a large external vendor fee is replaced by a relatively small investment in content development and facilitation support.

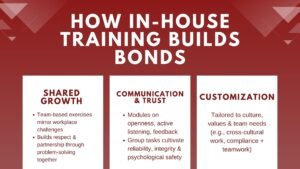

The difficulty with this approach is consistency. Not all internal subject matter experts are good trainers, and quality can vary widely from one facilitator to the next. Organisations that have handled this successfully have invested in a structured facilitator development process, equipping in-house experts with the instructional design and delivery skills they need to transfer their expertise. This is an investment not only in the quality of training but also in the reliability of the resulting evaluation data, because consistent delivery produces learner results that are easier to interpret.

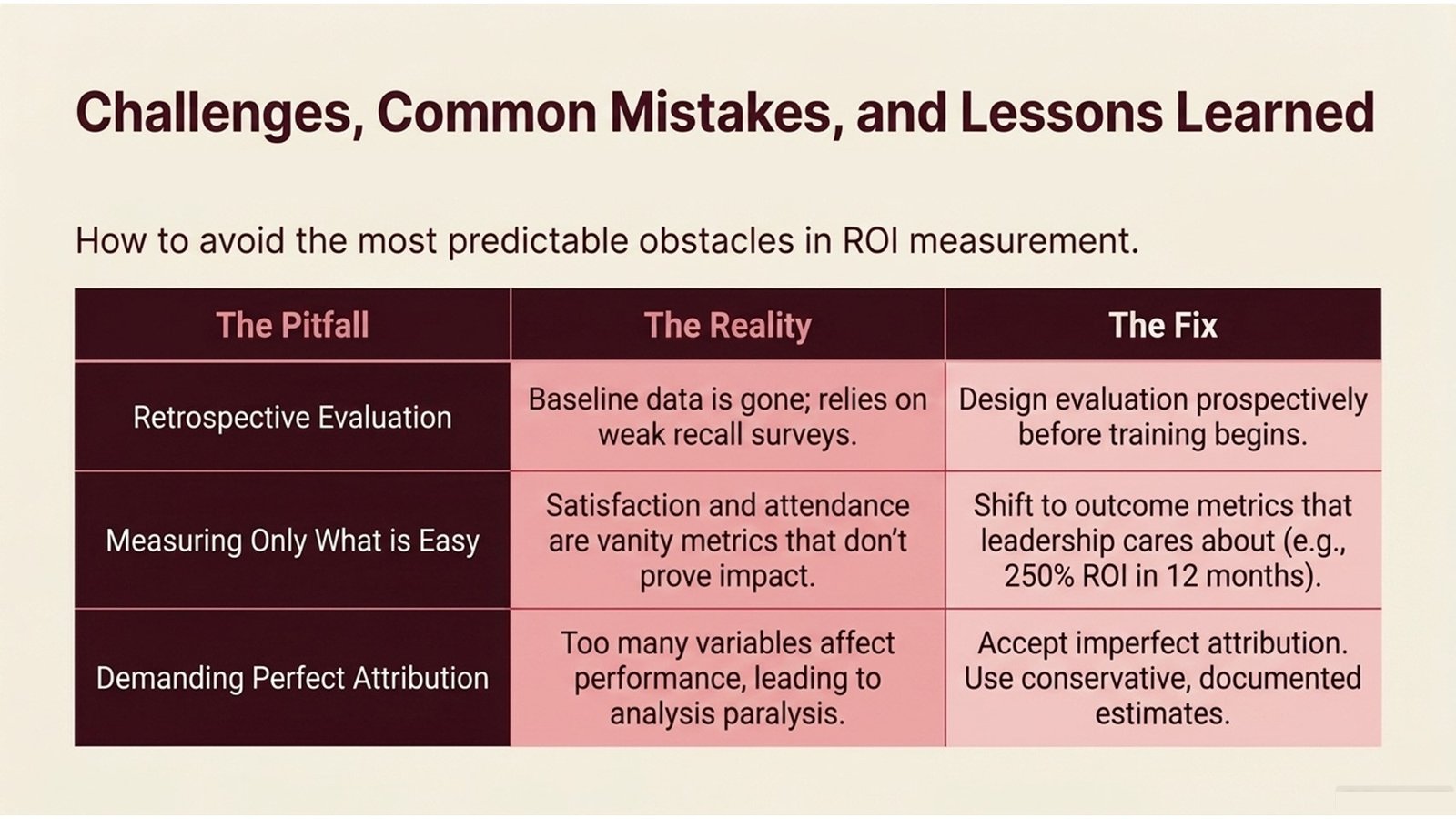

Challenges, Common Mistakes, and Lessons Learned

Even organisations with the best intentions and a genuine commitment to measuring training ROI run into predictable challenges. Understanding these before you start is one of the most practical things a junior or mid-level professional can do to become effective in this area quickly. The easiest mistake to make is to treat measurement as an afterthought. When evaluation is planned retrospectively, after the programme has already been delivered, the baseline data is lost, the participants have moved on, and the most an evaluator can do is rely on recall-based surveys and managers' impressions. These are useful, but far less powerful than data collected prospectively.

The second mistake is measuring what is easy to measure rather than what matters. Completion rates, satisfaction scores and attendance figures are the training equivalent of vanity metrics: they tend to look good but tell decision-makers very little about business impact. Organisations that have shifted their evaluation culture from input-based to outcome-based metrics often report that their training discussions with senior leadership become more strategic and less defensive. When you can show that an investment in a cost-effective in-house training programme returned 250% within twelve months, you are no longer defending a budget line; you are making a business case for investment.

Attribution is a third challenge, especially for those working in regulated or complex industries. In a busy financial services organisation, many variables act on analyst performance at once: new systems, changes in team composition, market movements. Isolating the contribution of a corporate finance masterclass in this environment requires careful evaluation design. One lesson experienced evaluation practitioners repeat consistently is that imperfect attribution is better than none. A conservative, well-documented estimate of training's contribution that openly acknowledges uncertainty is more credible and more useful to decision-makers than an unsupported claim that training was valuable. Make a habit of being explicit about assumptions, and training ROI measurement will become more feasible and more convincing over time.
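
One way to make attribution assumptions explicit is to report ROI under several assumed attribution shares rather than as a single figure. The sketch below is a minimal illustration. The observed benefit, the costs, the three shares and the function names are all hypothetical, and the shares themselves are judgement calls that should be documented alongside the result.

```python
def roi_percent(benefits, costs):
    """ROI as a percentage: net benefits divided by costs, times 100."""
    return (benefits - costs) / costs * 100


def roi_scenarios(observed_benefit, costs, attribution_shares):
    """ROI for each assumed share of the observed benefit credited to training."""
    return {
        label: roi_percent(observed_benefit * share, costs)
        for label, share in attribution_shares.items()
    }


# Hypothetical inputs; the attribution shares are assumptions to be documented.
scenarios = roi_scenarios(
    observed_benefit=300_000,
    costs=60_000,
    attribution_shares={"conservative": 0.25, "central": 0.5, "optimistic": 0.75},
)
for label, roi in scenarios.items():
    print(f"{label}: {roi:.0f}% ROI")
```

Presenting the conservative case first, with its assumptions stated, is usually easier to defend than a single headline number.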

Conclusion: Turning Measurement Into a Competitive Advantage

The case for rigorous measurement of training ROI has never been more compelling. As organisations face rising expectations of accountability across all functions, the ability to show that training delivers measurable business value is what separates learning functions that grow from those that shrink. For junior to mid-level professionals, whether in L&D, HR or finance, building this capability early is a genuine differentiator whose value compounds over a career.

The move towards cost-effective in-house training programmes that are designed around business outcomes and assessed in a disciplined way is not merely a cost-saving exercise. It is a repositioning of the learning function as work that genuinely creates value. When measurement is planned into training, the training itself is almost always better: more focused, more relevant and more closely tied to what participants need to change in their work.

For those working in or near finance and analytics, a well-designed corporate finance masterclass with rigorous measurement of its own impact is both a career development opportunity and a proof of concept. The disciplines of financial thinking (clear objectives, baseline data, controlled comparison, benefit quantification) apply directly to training evaluation. Professionals who can move between these two worlds will be in great demand as organisations strive to make their learning investments as rigorous and accountable as any other capital allocation decision.

Actionable ideas: Start every training programme by writing down the two or three business measures you want to change, and validate them with the relevant line manager or finance partner. Gather baseline data before training begins, even if it is imperfect. Develop a simple evaluation template that covers all four Kirkpatrick levels and use it consistently across programmes. When reporting findings, always present a cost breakdown alongside the benefit figure so that the ROI number is transparent and credible. And resist the temptation to overstate: a well-evidenced, more modest ROI of 150% will do more for your professional reputation than an unsubstantiated claim of 500%. Good measurement is not an administrative overhead; it is the foundation of a training function that earns its place at the strategic table.